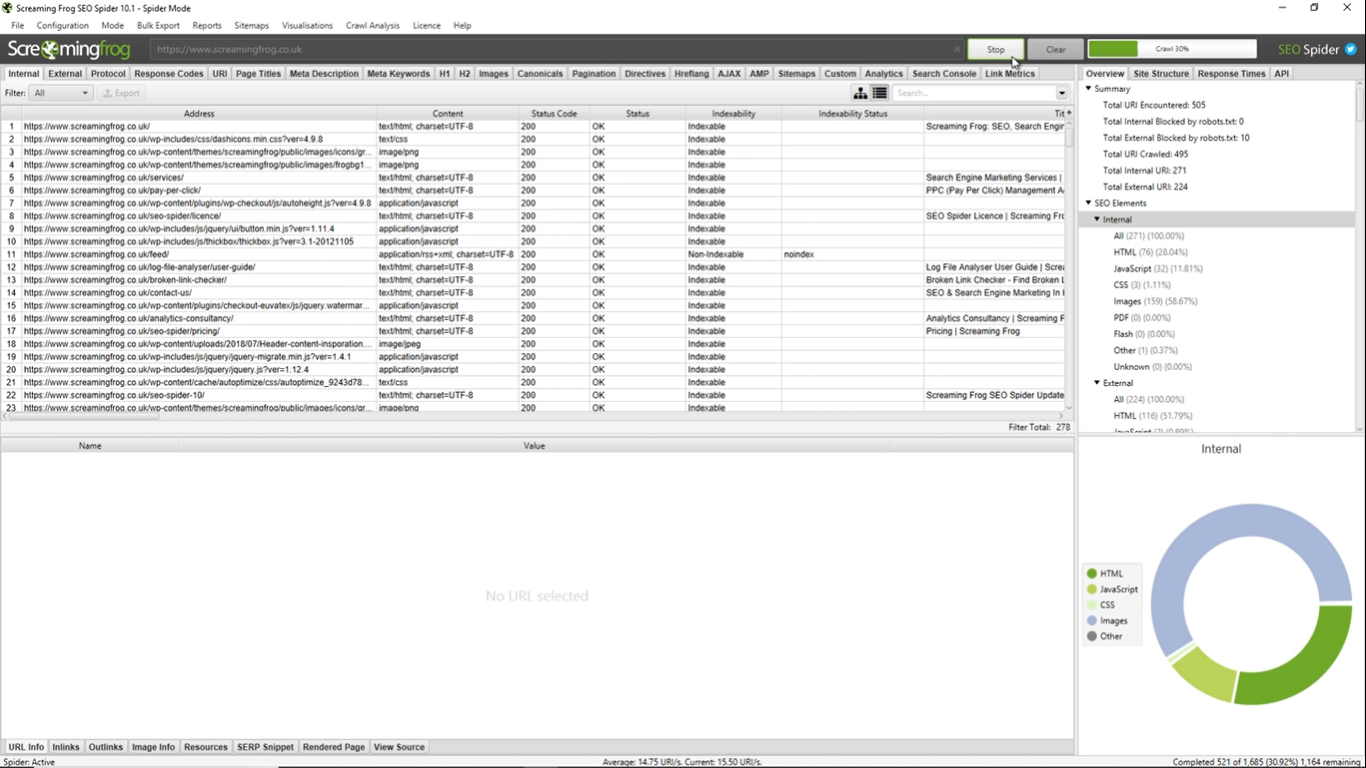

The primary data source is an export of a scheduled Screaming Frog (SF) crawl. To have it fully automated, we also need this data to be appended (not overwritten) to a master Google Sheet. Screaming Frog are planning to add this in the future, but until then, follow this guide.

In my example, I took some URLs from our firm's blog, created a file named list.txt and saved the URLs into it. (You can gather these from an existing Spider mode crawl.) Then I opened the SEO Spider and built a new configuration file with all crawling options deactivated except the ones required.

Looking to integrate your crawl results with Google Data Studio? Here's a quick guide on how to build fully automated time-series reports with Data Studio, based on scheduled Screaming Frog crawls.

Update: With the release of version 16 of the SEO Spider, you can now schedule a bespoke export designed for Data Studio integration directly within the tool. View our updated guide on How To Automate Crawl Reports In Data Studio.

To use crawl data in a time series in Data Studio, we need a Google Sheet export with a date/time dimension recording when the crawl was run. This lets you gather data natively from a crawl, through an API connector, or even scraped by custom extraction, all of which can be reported and compared over time within Data Studio automatically.
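As a rough illustration of the two requirements above (a date/time dimension on every row, and appending rather than overwriting), here is a minimal Python sketch that works on local CSV files. It is not the SEO Spider's own export mechanism and it does not talk to Google Sheets. The function name `append_crawl_export`, the `Crawl Date` column name and the file names are all hypothetical, and the sketch assumes each export is a UTF-8 CSV with the same columns on every run.

```python
import csv
from datetime import datetime, timezone
from pathlib import Path


def append_crawl_export(export_path, master_path, crawl_time=None):
    """Append the rows of one crawl export to a master CSV.

    Each row is stamped with the crawl date/time so the master file can be
    used as a time series. Returns the number of rows appended.
    Assumption: every export has the same columns as the master file.
    """
    crawl_time = crawl_time or datetime.now(timezone.utc)
    stamp = crawl_time.strftime("%Y-%m-%d %H:%M:%S")

    # "utf-8-sig" reads plain UTF-8 and also strips a byte order mark if present.
    with open(export_path, newline="", encoding="utf-8-sig") as src:
        reader = csv.DictReader(src)
        rows = list(reader)
        fieldnames = ["Crawl Date"] + list(reader.fieldnames or [])

    master = Path(master_path)
    # Only write the header when the master file is new or empty.
    write_header = not master.exists() or master.stat().st_size == 0

    # Mode "a" appends, so earlier crawls are never overwritten.
    with open(master, "a", newline="", encoding="utf-8") as dst:
        writer = csv.DictWriter(dst, fieldnames=fieldnames)
        if write_header:
            writer.writeheader()
        for row in rows:
            writer.writerow({"Crawl Date": stamp, **row})

    return len(rows)
```

Calling `append_crawl_export("export.csv", "master.csv")` after each scheduled crawl would grow the master file by one dated batch of rows per run. Getting that data into a Google Sheet is a separate step that this sketch leaves out.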
